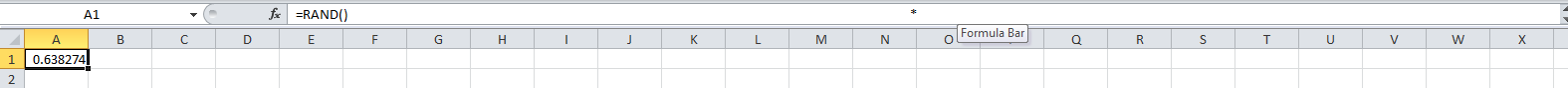

This isn’t a particularly high-tech post, but it helped me out in tricking innocent bystanders of a cell’s true intention. Suppose you are using Excel and want to show someone a nonsensical output from a cell evaluation. You type in something innocuous, like =rand(), which should give a random number between 0 and 1. If you want the “random” number to always be between .5 and .75, however, you could type =rand()*.25+.5. In my case, I wanted to show someone the equation “=rand()” but the output from “=rand()*.25+.5”, so that whenever I updated, it would give a number between .5 and .75. The observer would be confused and hilarity would ensue as the “random” number always falls between .5 and .75. To do this, type “=rand()” at the far left of the cell equation box, like normal. Then put a bunch of spaces until you get to the middle of the equation box, and put “*”. Then put more spaces until you are off the initial screen and type the rest of the equation “.25+.5”. Now, when the equation is viewed, the observer will only see the “=rand()”, unless they are looking very closely and notice the odd multiplication sign in the middle of the line. In my experiments, I have found that Excel will delete your excessive spaces if you only put “=rand()” on the far left and “*.25+.5” off the screen. For some reason, the spaces are not deleted if you type something in the middle of the equation box. Use this information as you will.

Category Archives: Programming

Code Monkey Monday- Transferring a MySQL Database with MySQL Workbench

I have a desktop work computer and a home laptop. For a recent project, I needed access to the same MySQL database from both computers, as I worked on the project at both locations. The database was hosted locally on my work computer. One option would be to VPN to my work computer, but that wasn’t an attractive option for various reasons. I decided that I wanted the database hosted on both computers locally.

To move a MySQL database to another computer,

1. Open MySQL Workbench on the computer currently hosting the database.

2. Goto Server -> Data Export in the menu

3. Select the database you want to copy, and select a destination in the “Export to Self-Contained File” field.

4. Click Start Export

5. Once done exporting, transfer the dump file to the other computer

6. Open MySQL Workbench on the second computer

7. Goto Server -> Data Import in the menu

8. Select “Import from Self-Contained File” and the dump file just transferred.

9. Click Start Import.

You should be good to go. Copying multiple databases or giant databases might be trickier, but this works in most cases.

Code Monkey Monday- Math Mode in Microsoft Word

If you write in LaTeX (or can learn), you’re well-equipped to write mathematical equations in newer versions of Microsoft Word. I have Word 2010 installed on my computer. To enter math mode, hold down the Alt key and hit the “=” key. Math mode accepts LaTeX-like formulas. It is slightly better than LaTeX as well, because you can see exactly how your equation will look as you type it. Whenever you’ve finished typing a complicated symbol or function and want it to display, just hit spacebar. You can click outside the math mode box or type Alt+= again to exit math mode.

Here’s an example. In math mode, type “\int_0^24 \lambda(t) dt” to get an integral that looks like . This saves you from having to find and click on all the suggested symbols in Word to get an equation you want.

Thanks to Alex Mills for this suggestion.

Remember that you can also use LaTeX in WordPress blog posts.

Code Monkey Monday- Code Monkey Anthem

Code Monkey, by Jonathan Coulton

Very catchy. Very appropriate. Very much the anthem to my Monday morning posts.

Code Monkey Monday- Transferring an Access Database to MySQL

Why are you using Access? It’s a pain in the butt and I don’t like it. Transfer your database to MySQL for easier compatibility with… everything.

I had to make this transition a few weeks ago, and here’s what I did. Remember that I am not a database expert, but this worked for me.

1. Download MySQL on your computer. Click on the Enterprise Edition link here. Oracle apparently controls MySQL nowadays, so sign in or register with them to get to the download. You can insert fake data in every data field for the registration except the email address. Use a real email address so you can confirm your registration. Once you get to the download page in Oracle, select MySQL and your computer type (i.e. Windows 64 bit). Input that query, then download the “MySQL Installer 5.6.20.0 Package” (or whatever version they’re currently on).

2. Run the installer .msi. Install the “Developer Default” setting unless you know any better. When you start installing, MySQL will probably tell you that you’re missing some pre-requisite programs. Just install them through MySQL like it wants you to. Install the program. After the install, there will be some configuration. On the “MySQL Server Configuration” page, I’d leave the defaults (Port=3306). On the next page, you’ll have to specify a password. Remember that password for future use. I’d leave the defaults on the other pages as well, unless you want to uncheck the box for starting MySQL Server at computer startup.

3. Download the “Access to MySQL” tool from here. The download link is at the top of the page.

4. Install the Access to MySQL program.

5. If your computer is like mine, when you try to run the Access to MySQL program, a box will pop up and complain about drivers. It will say you can only create dump files instead of doing the transition in one step. Whatever, just continue.

6. Click “Next” on the first page. In the Filename field of the second page, input the path to the .mdb file of your Access database. If you have security settings (username/password), enter those. On the third page, create a dump file (probably your only option). Type the name that you want the MySQL database to have and the path that you want the dump file saved at. Click “Next” or “Run” on any remaining pages. This will create a dump file of all of your tables in the Access database that you specified. Now we’ll import that dump file into MySQL.

7. Open MySQL Workbench. Double click on your “Local instance MySQL56” box on the front page. Enter your password that you just specified during installation.

8. Goto Server->Data Import. Select the bubble for “Import from Self-Contained File” and enter the path to your dump file. Click Start Import at the bottom.

9. If all went well, your Access database will now be available in MySQL under the database name that you specified in step 6.

You can now query your database from Python using my instructions from last week.

Code Monkey Monday- Querying a MySQL Database with Python

1. Set up a MySQL Database on your computer.

2. Install the Python library MySQL-python from http://www.lfd.uci.edu/~gohlke/pythonlibs/

3. In your code, add the import statement “import MySQLdb” at the top.

4. Find the database host, username, password, and database of interest.

5. Set up a database connection in Python with a line similar to

“db_connection = MySQLdb.connect(host=’localhost’, user=’root’, passwd=’FluffyBunnies1234′, db=’MyAwesomeDatabase’)”

Here, my host is “localhost” because the database is stored locally on my desktop at work. I access the database from the “root” user account. You can also connect to external databases as long as you frame the connection string properly.

6. Set up a database cursor that you will use to execute queries. “cursor = db_connection.cursor()”

7. Structure up your SQL query. The query should be a string. All white space is treated equivalently, so you can have a space or a newline between parts of the SQL. Example “SQL = SELECT alpha, beta FROM parameter_table WHERE alpha>.5 ORDER BY beta ASC”.

8. Execute the query with the cursor. “cursor.execute(SQL)”

9. Fetch the output. “output = cursor.fetchall()”. If your query will return too many results to grab all at once, you can instead use the function fetchone() instead.

10. Your “output” variable will be a list of lists, where each inner list represents one line returned by query and each element of the inner list represents a column referenced in the SELECT statement. If my query above returned 500 parameter combinations, then I would have a list of 500 lists, where each of the 500 inner lists has two elements: alpha and beta.

Hope this gets you started. For a full documentation of the MySQLdb class, you could start here. Pay attention to make sure you’re reading the parts about the MySQLdb wrapper.

Code Monkey Monday- Notepad++

If you like to read data in from text files or save lots of data to text files, you’ve probably discovered that Window’s default text reading program, Notepad, sucks. It can’t open large (MB or larger) text files, sucks at formatting, and seems slow. I prefer the freely downloaded program Notepad++. It can handle large files with ease, allows multiple text files to be open in the same program, highlights all equivalent words if a word is highlighted, gives line numbers, and probably has a ton of other capabilities that I’m not familiar with. You should upgrade to Notepad++.

Code Monkey Monday- Exec(uting) and Eval(uating) Strings in Python

You want to include a little bit of dark magic in your Python coding, right? Well, read on.

More times than I care to admit, I have situations in which I want my code to write more code for me. Because either I am lazy or I need to be tricky. A recent example: I needed to create and populate a list of lists for each week of the NFL season to contain some of my analysis results. With normal coding, I saw two options: copy paste my code 21 times (17 regular season weeks and 4 playoff weeks) or create a confusing list of list of lists of results. Because the analysis was consistent over the weeks, there was a third option that I preferred: using the exec() and eval() statements.

exec(string) takes a string and executes it like it was a full line of code. So exec(“list”+str(week)+”=[]”) would execute different things depending on the value of week:

If week==1, the line would be: list1=[]

If week==2, the line would be: list2=[]

etc. It basically substitutes the value of week into the string and then executes what the string says.

By cycling through the weeks of the season, I created an empty list for each week using exec:

for week in weeks:

exec(“list”+str(week)+”=[]”)

2 lines of code to create 21 lists instead of 21.

I later populated the list with something like: exec(“list”+str(week)+”.append(results)”)

The eval(string) turns the string into code and then allows it to be used in a larger code statement. For example: output+=eval(“list”+week+”[i]”)

This creates the code line

output+=list1[i]

if week==1. In this way, I can add the i’th component of each week’s list to some output string that I saved.

There are more exotic uses of exec() and eval() that I’ve used in the past. Someday I’ll write about how we created code to parse and evaluate any Google Protocol Buffer (kind of like xml) at Booz Allen.

Disclaimer: exec() and eval() may not be “good practice” for a professional coder, but I’m not a professional coder and just create code that works for me.

Code Monkey Monday- Keeping Excel from Auto-updating

Quick tip: When you use the RAND function in Excel to generate a random number, the number tends to update everytime you change something in Excel. To stop the auto-updating, copy the random number(s), and then re-paste them into the same cell(s) using Paste Special -> Values. This will save the random value and get rid of the auto-updating random function.

How to bulk download Batter vs. Pitcher Data

I worked on a project in a Complex Systems class where I wanted to know if there was any value in looking at the network of at-bats in baseball. To create this network, I assumed that if a batter got on base via hit, HBP, or walk, the batter won the at-bat and I drew a link from the pitcher to the batter. If the pitcher got the batter out, I said the pitcher won the at-bat and drew a link from the batter to the pitcher in the network. This created a directed graph that I could run networked statistics on, such as PageRank. I wanted to know how well the rank of a player in summary statistics (ERA, AVG, OBP, WAR, etc) matched up with the rank of the player in PageRank. PageRank, in this context, puts value upon beating other players with high PageRank. So, when playing the Dodgers, getting a hit off of Clayton Kershaw last year was worth more than getting a hit off of Chris Capuano. Did good players overperform or underperform against other good players? Does this have value for predicting playoff success? These were some of my questions as I started my study.

To get results of batter/pitcher matchups, I crawled Baseball-Reference.com. Their Play Index Tool lets you look up the results of any players’ batting/pitching matchups, possibly filtered by year. I wanted to download every at-bat for the year 2013.

To begin with, I’m not sure Baseball-Reference.com wanted me to crawl their records. They have disclaimers against this sort of bulk downloading, but I was using the data for a personal project and didn’t profit from it, so I went ahead. They didn’t kick off my IP as I went about crawling/downloading these matchups.

My code for this project is in Python. I used the screen-scraping package Beautiful Soup.

I first had to grab the usernames for all players in the majors in 2013. I went to this page to get the batters and this page to get the pitchers. Looking at the page source for the pitching page, you notice that the usernames start around line 1727. Download the page source for those pages and use some logic to grab all the usernames for pitchers and batters. Here is my ugly code to parse the usernames.

Once you have the usernames, you’ll want to crawl Baseball-Reference.com to get matchup data from every batter. Unfortunately, a batter’s matchup data (like this for Barry Larkin) creates the same page source whether you filter by year or not. Filtering by year only dynamically changes what is shown on the screen; it doesn’t change the page source, which is what we are going to crawl. So we have to use three steps to get only 2013 data:

-Parse the page source for a batter’s alltime matchups to see which pitchers he ever faced

-For each pitcher, see if that pitcher is in the list of 2013 pitchers

-If it is, crawl ‘http://www.baseball-reference.com/play-index/batter_vs_pitcher.cgi?batter=’+batter+’&pitcher=’+pitcher to get the line related to 2013. Add this line to your statistics that you are keeping.

Here is my Python code to download all at-bats from 2013. You’ll notice that I import urlopen from urllib2 to tell Python to open the webpages of interest. Then I use Beautiful Soup to parse the page source. Throughout the code, I added in lines like “time.sleep(random.random()*10)” from the time package to make the code delay a random amount of time. This kept me from overloading Baseball-Reference.com with requests and hopefully kept me from pissing them off. If you’re interested in using the code, note that you’ll obviously need to change your input/output folders to match your computer.

Hope this helps. I know it’s not 100% complete in its description, but post in the comments if you’re confused in some way.